“It Feels Like We Are on Our Own”: How Black LGBTQ+ Creators Navigate Algorithmic Bias on TikTok

Role: Lead Researcher

Overview/Problem Statement

On TikTok, where influencers and trends often seem to skyrocket overnight, Black LGBTQ+ creators say they face invisible barriers: algorithms that, in their experience, systematically suppress their content and reduce its visibility and engagement. These creators work within an ecosystem that claims to be inclusive but whose underlying algorithms seem to penalize them for their identities.

My research sought to uncover and understand these hidden biases. By examining how Black LGBTQ+ creators navigate TikTok's algorithms, I aimed to reveal how algorithmic bias can affect marginalized communities and to explore how these creators resist, adapt, and reclaim their visibility in a space that often feels stacked against them.

Why This Matters: As algorithms increasingly shape what content we see online, marginalized creators risk having their voices silenced by these systems. Understanding the effects of algorithmic bias is a first step toward building more inclusive platforms and ensuring that marginalized creators are not left behind in the digital space.

Impact & Recommendations

-

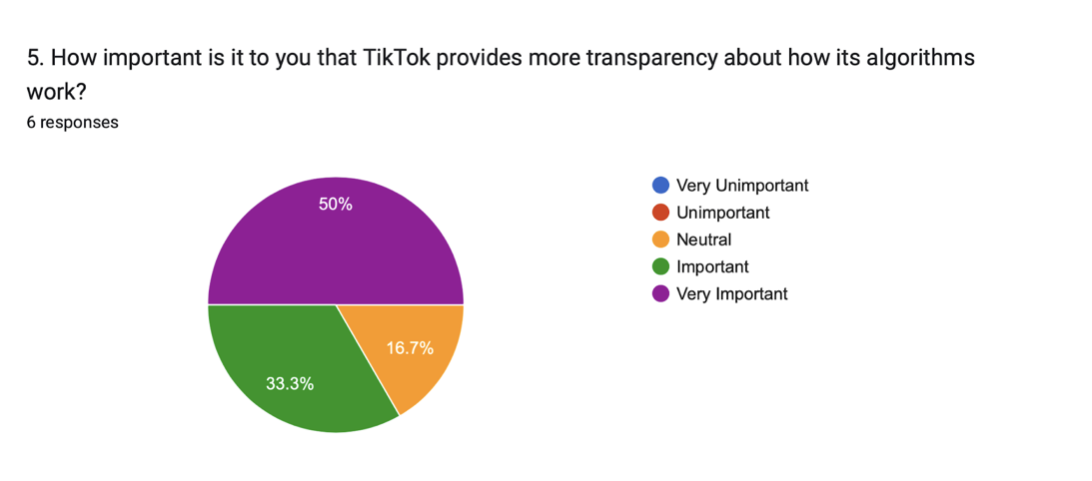

TikTok should implement transparent guidelines and algorithmic transparency measures, allowing creators to understand how their content is being evaluated and promoted—or suppressed.

Without transparency, creators are left to guess why their content is being suppressed, which fuels frustration and distrust. Clear guidelines would help rebuild trust and allow creators to focus on producing content rather than navigating algorithmic roadblocks.

- TikTok should highlight content from marginalized creators during significant cultural moments, such as Pride Month and Black History Month, to ensure more authentic representation and counter bias.

Representation matters, especially in digital spaces. Platforms should have mechanisms in place to ensure that marginalized creators' voices are amplified rather than silenced by the algorithm.

- TikTok should develop community-building features, such as discussion forums or collaborative initiatives that elevate marginalized voices, so that creators can band together, amplify each other’s work, and offer mutual support.

Building community solidarity is a powerful way for marginalized creators to resist systemic bias and ensure that their voices are heard, even when the algorithms are working against them.

“Every time I try to post something that truly represents me, it feels like it’s suppressed right away.”

Interview 3; August 6, 2024

Research Process

Given the complexity of algorithmic bias and its intersection with marginalized identities, I adopted a mixed-methods approach that combined qualitative and quantitative data. This allowed me to capture both the personal experiences of individual creators and broader patterns in how they perceive algorithmic suppression. Together, the semi-structured interviews and the survey let me explore both the emotional impact of algorithmic bias and the trends in how Black LGBTQ+ creators interact with TikTok's algorithms.

Research Focus

- Investigate the nature and impact of algorithmic biases specifically affecting Black LGBTQ+ communities on TikTok.
- Explore the adaptive content strategies developed by Black LGBTQ+ creators in response to these biases.
- Evaluate the perceived effectiveness of these strategies in improving engagement and representation on the platform, based on creators' reflections.

Methods Used

- Qualitative Interviews: Conducted five semi-structured interviews with Black LGBTQ+ creators

Explored how they perceive and respond to TikTok’s algorithmic biases. The goal was to gather in-depth personal experiences and strategies for combating content suppression.

- Quantitative Survey: Designed and distributed an anonymous survey to Black LGBTQ+ creators

Collected data on perceived content suppression, engagement rates, and adaptive strategies. The survey was intended to gauge how widespread these experiences are and to complement the creators' interview accounts. Descriptive statistics, including counts and percentages, were used to summarize the survey data.

- Thematic & Narrative Analysis: Applied thematic analysis to the interview data to identify recurring themes

This analysis provided deeper insight into the individual experiences of Black LGBTQ+ TikTok creators. Codes were generated iteratively, moving between data and analysis to develop themes that captured the complex realities of participants' experiences with algorithmic bias on TikTok. Narrative analysis was used alongside it to understand how participants construct and convey their personal experiences.
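The survey was summarized with descriptive statistics (counts and percentages). As a minimal illustration, the Python sketch below shows how a single multiple-choice survey item could be tallied. The function name `summarize` and the sample answers are hypothetical placeholders, not the study's actual survey instrument, analysis code, or raw data. Because the survey received six completed responses, each individual answer shifts a percentage by about 16.7 points (1 of 6).

```python
from collections import Counter


def summarize(responses):
    """Return {answer: (count, percent)} for one multiple-choice survey item.

    Percentages use the number of completed responses as the denominator
    and are rounded to one decimal place.
    """
    total = len(responses)
    if total == 0:
        return {}  # no completed responses, nothing to summarize
    counts = Counter(responses)  # tallies each distinct answer
    return {answer: (n, round(100 * n / total, 1)) for answer, n in counts.items()}


# Hypothetical answers to one yes/no/unsure item (illustrative only,
# not the study's raw survey data).
answers = ["Yes", "Yes", "No", "Yes", "Unsure", "Yes"]

for answer, (count, percent) in summarize(answers).items():
    print(f"{answer}: {count} of {len(answers)} ({percent}%)")
```

Reporting the raw count next to each percentage, as this sketch does, keeps the small denominator visible, so readers can see that a figure like 66.7% corresponds to only a handful of people.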

Findings & Insights

Algorithmic Bias: Interviewees consistently reported feeling that TikTok’s algorithms disproportionately suppressed their content. Many creators shared that despite high engagement from their followers, their posts would be flagged or receive low visibility, leading them to believe that their identity as both Black and LGBTQ+ was a factor.

Algorithmic bias can silence marginalized voices, limiting creators' ability to reach audiences and reducing their chances of monetizing their content. Platforms need to acknowledge that algorithmic bias can reinforce systemic inequities and further marginalize creators.

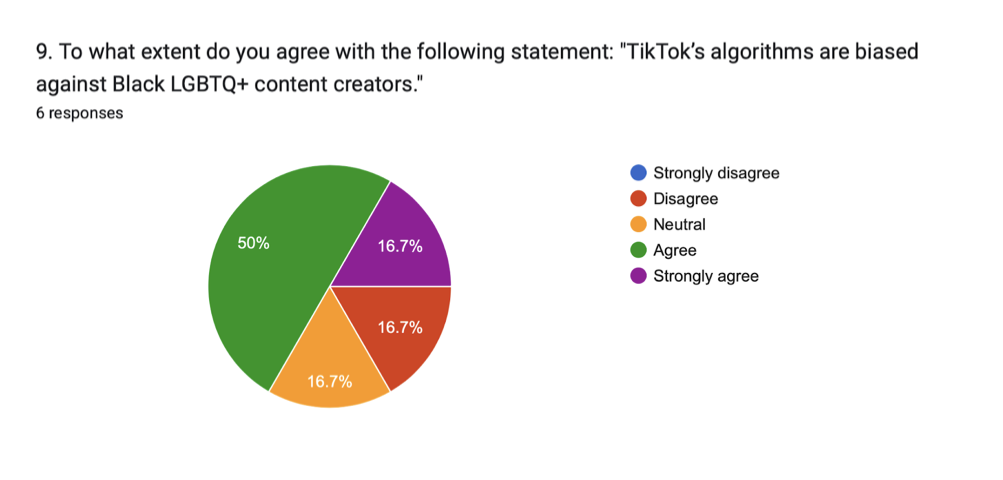

Emotional Impact: Many interviewees expressed frustration, isolation, and distrust toward the platform because they believed their content was being targeted based on their identity. The survey results echoed this sentiment: 66.7% of respondents (four of six) believed their content had been unfairly suppressed.

The emotional toll of being consistently sidelined by an opaque algorithm is significant. It affects not only creators’ mental health but also their career growth in an industry increasingly reliant on social media visibility.

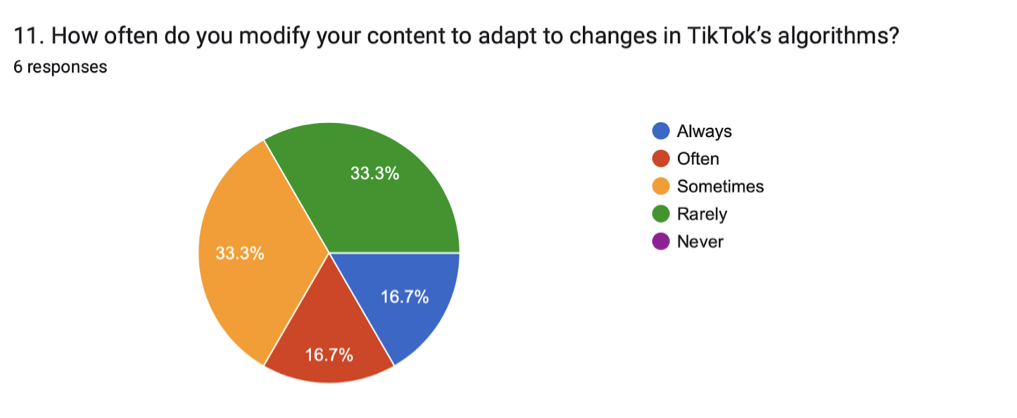

Adaptive Strategies: To counteract algorithmic bias, creators developed strategies such as using trending hashtags, posting at optimized times, and modifying their content to avoid specific keywords that they believed triggered suppression. Despite these efforts, creators often felt their strategies were temporary fixes for a systemic issue.

Creators shouldn’t have to adapt to algorithms that disproportionately target them. These workarounds are symptomatic of larger structural problems within the platform that need to be addressed with systemic changes.

Platform Inconsistencies: Despite TikTok’s public-facing campaigns celebrating diversity and inclusion, many creators felt that these initiatives did not reflect their day-to-day experiences. Creators expressed frustration at the gap between the platform's public messaging and how its policies play out in practice.

Platforms must reconcile their public commitments to inclusivity with the lived experiences of their users. Failing to do so risks alienating marginalized communities and perpetuating a lack of trust in the platform.

Unexpected Challenges

When I set out to conduct the survey, my goal was to gather quantitative data from Black LGBTQ+ TikTok creators that could be generalized to a larger group. I wanted to collect statistical evidence on their experiences with algorithmic bias and the strategies they used to counteract it. However, I ran into an unexpected obstacle: online trolls.

I had planned to distribute the survey widely online. But after I posted a TikTok about my research, I started getting comments from homophobic and transphobic trolls. Worried that they might sabotage the research, I had to change my approach. To protect the integrity of the data, I shifted to a more controlled recruitment process, relying on direct outreach through trusted community networks instead of public posting. This limited the number of people who saw the survey, resulting in only six completed responses.

Although I had hoped for a larger sample size, the sensitive nature of the topic made it necessary to prioritize the quality and authenticity of the responses over quantity. The small number of participants means the survey results can’t be considered representative of the wider Black LGBTQ+ creator community, but they still offer valuable insights and reflect broader challenges in researching marginalized online communities. This challenge taught me the importance of adapting research methods in response to unexpected obstacles while maintaining data integrity.

Reflection & Lessons Learned

This research showed how algorithms can perpetuate real-world inequalities, especially for creators who navigate multiple layers of marginalization. The project underscored the importance of algorithmic transparency and the need for platforms to take responsibility for the biases encoded in their systems. The creators I interviewed taught me that visibility isn’t just a technical challenge; it’s a social one. Moving forward, my goal is to develop UX solutions that center fairness, inclusivity, and accountability, helping digital platforms become more equitable for all users.